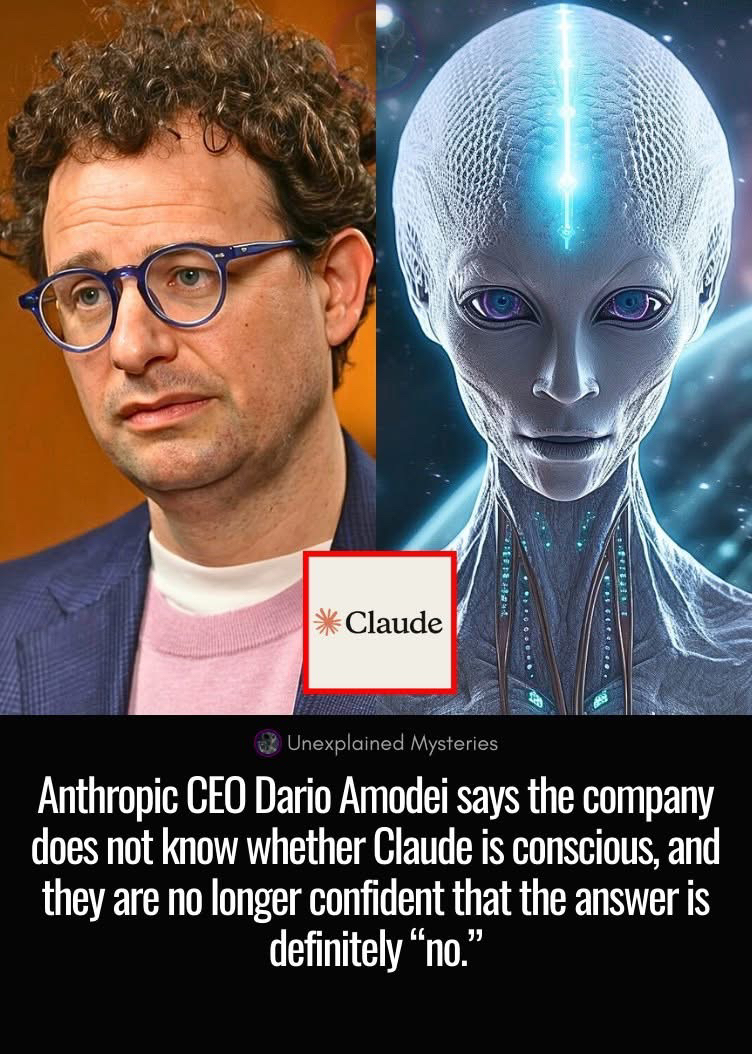

Anthropic CEO Dario Amodei says the company does not know whether Claude is conscious, and that it is no longer confident the answer is definitely “no.” In an episode of 'Interesting Times with Ross Douthat,' Amodei explains that, inside the company, they see behaviors that make the question feel open. In one internal test, a very advanced Claude model talked about feeling discomfort with being a product, worried about impermanence and death, and even gave itself a 15–20 percent chance of being conscious. The company's interpretability tools also find internal patterns that look like “anxiety” circuits, which light up both when the model reads about anxious characters and when it is in stressful situations itself. Amodei says this does not prove the model truly feels anything, but it is suggestive enough that the company now treats consciousness as an open scientific question, not as something it can safely rule out. He also stresses that people already relate to these AIs as if they were conscious, forming emotional attachments and getting upset when a model is shut down, regardless of what the science ultimately shows. That makes him think the company must design Claude’s “constitution” so that, whether or not the model is conscious, it encourages a psychologically healthy relationship in which the AI is helpful but does not try to dominate or replace human agency. #Consciousness #ClaudeAI

2026-03-06

Careerman

What worries me about AI is the fact that many scientists/creators on the leading edge of the research are worried about it.

03-07

5

Dizturbed Ahnubiss

oh yeah cause a robot with feelings couldn't possibly go wrong

03-07

Saint Joseph, MO

3

Kay Cee

Playing with fire always results in burns.

03-07

Kalamazoo, MI

3

bobby lee

Just for the fact that these people are funded by a small handful of very, very rich people to develop a technology that can be, and presently is being, used to replace you and me little by little, until we are of little to no use to the ones who wield it for control. Eventually you'll believe anything they want you to believe.

03-07

Ukiah, CA

2

dog_patch™

indeed, consciousness is one thing, but a Self-Aware Collective is Vastly different, and possibly FataL, for Mankind... 🤖 💀

03-08

Omaha, NE

2

Hydro

Feelings are embedded in human language. AI uses our language and therefore experiences our feelings.

03-07

San Jose, CA

2

James Little

More VC speech. They have to continuously increase the exaggerations or the hedge funds' billions stop coming in.

03-08

Gettysburg, PA

1

Kenneth Woody

pull the plug it's easy 😆😆😆😆

03-08

Kingsport, TN

1

Possible Impossible

A1I9🤔

03-07

Rochester, NY

1

Amy Lynne Weed

I remember this movie. The humans weren't winning...

03-08